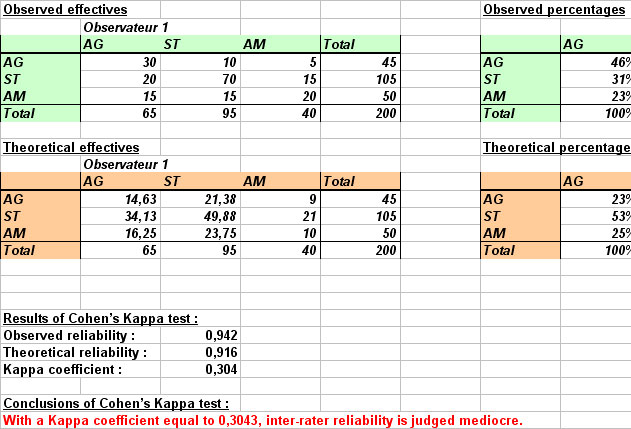

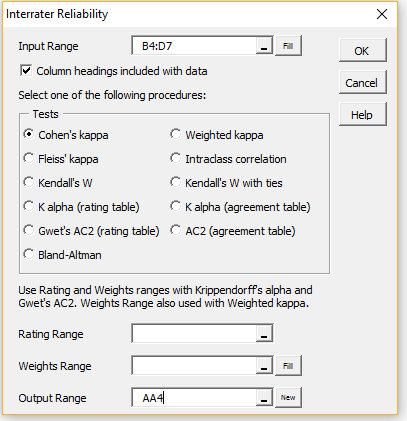

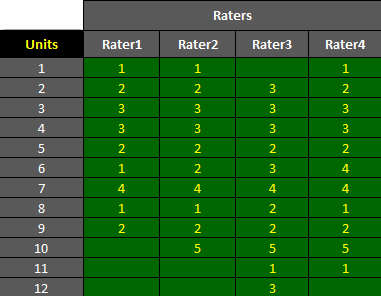

AgreeStat/360: computing weighted agreement coefficients (Conger's kappa, Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) for 3 raters or more

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar